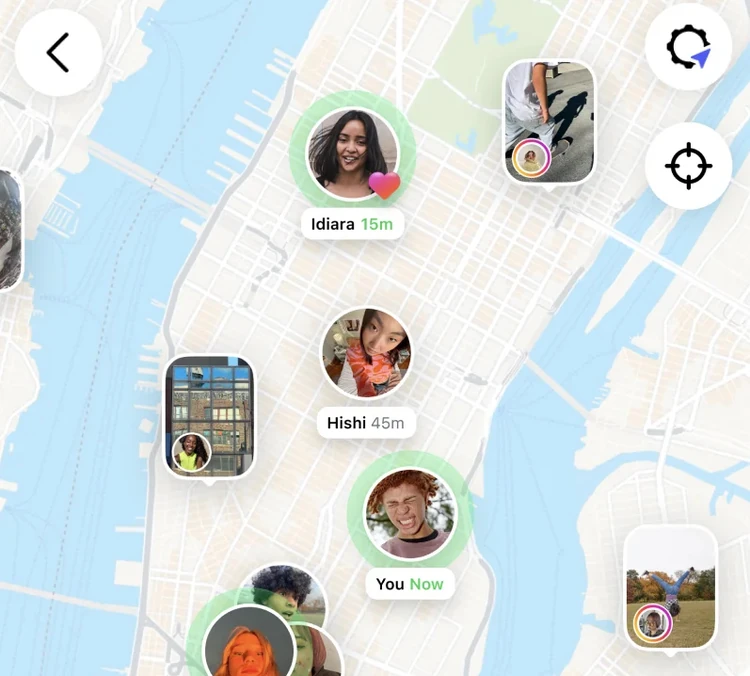

- White House says US companies will oversee TikTok’s algorithm and Americans will hold six of seven board seats for US operations.
- Oracle, chaired by Trump ally Larry Ellison, will lead data and privacy protections.
- Trump and Xi discussed TikTok’s future, but Beijing has not confirmed approval of the deal.

White House signals breakthrough in talks

The White House announced over the weekend that US companies will take control of TikTok’s algorithm and that six of seven board seats in the app’s US operations will be held by Americans. Press secretary Karoline Leavitt said a deal could be signed “in the coming days,” though Chinese officials have yet to comment publicly.

Speaking on the Fox News program “Saturday in America,” Leavitt said “we are 100 percent confident that a deal is done,” but acknowledged in the same breath that it had not yet been signed, the New York Times reported.

The move follows years of negotiations over whether TikTok could continue to operate in the United States amid concerns over its Chinese parent company, ByteDance. The app had previously faced the threat of a ban unless its US business was sold.

Oracle to oversee data and privacy

Leavitt said that US tech giant Oracle will lead TikTok’s US data and privacy safeguards. Oracle’s founder and chair, Larry Ellison—long a political ally of President Trump—will play a central role.

“The data and privacy will be led by one of America’s greatest tech companies, Oracle, and the algorithm will also be controlled by America as well,” Leavitt told Fox News. She added that “all of those details have already been agreed upon,” with only a final signature needed to seal the deal.

The Ellison family’s influence in US media has been growing: Larry Ellison’s son, David, recently acquired Paramount, the owner of CBS News.

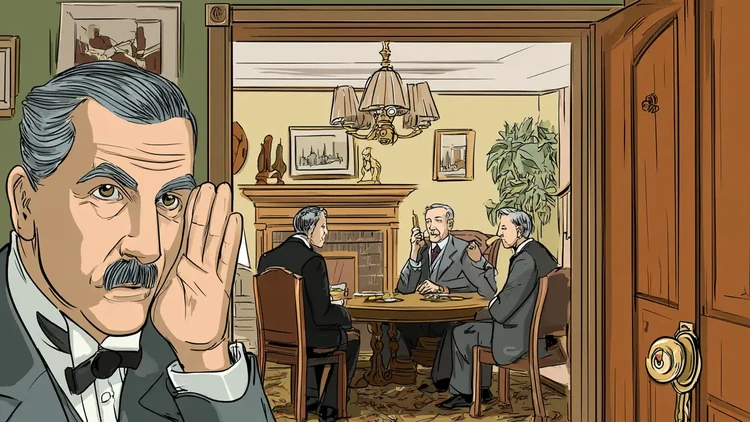

Mixed signals from China

President Trump said that he and Chinese President Xi Jinping discussed TikTok in a phone call and that both had approved the deal. He described the call as “productive” in a Truth Social post.

But Beijing’s response has been less clear. China’s Commerce Ministry said it welcomed negotiations “in accordance with market rules” and emphasized that any solution must comply with Chinese law. According to the BBC, the state news agency Xinhua quoted Xi as welcoming the talks but stopped short of confirming a final agreement.

Dispute over the algorithm

A major sticking point in the negotiations has been control of TikTok’s powerful recommendation algorithm, which shapes the content seen by its 170 million American users. Trump has sidestepped questions about whether a new algorithm would be needed, but the White House now insists that control will rest firmly in US hands.

Legal and political backdrop

In January, the US Supreme Court upheld a 2024 law banning TikTok unless ByteDance divested its US operations. The app briefly went offline before the deadline was pushed back. Trump, who sought to ban TikTok during his first term, reversed course in 2024 and embraced the platform to reach younger voters during his presidential campaign.

The Justice Department has previously warned that TikTok posed a national security threat of “immense depth and scale,” citing concerns about user data access.